Story

Resolution: Done

Normal

Incidents & Support

2

HCC Framework Sprint 53

https://redhat-internal.slack.com/archives/C022YV4E0NA/p1771536006799769

This particular error looks like a network problem where the request never reached Akamai/reverse-proxy, but it had me digging into other parts of the system. Details below.

Looking into the request, the asset was successfully uploaded to S3 at 9:48 am. The user experienced the error around 4:20 pm (EST).

There are no 4xx errors for this asset in the Splunk logs. There are 304s from successful caching, many 200s around the time of the error, and a few 302s around the time the asset was uploaded. https://rhcorporate.splunkcloud.com/en-US/app/search/search?q=search%20index%3Drh_akamai%20console.stage.redhat.com%2070337.f2233b9b1c51971f.js%20%7C%20spath%20statusCode%20%7C%20search%20statusCode!%3D200%7C%20spath%20statusCode%20%7C%20search%20statusCode!%3D304&display.page.search.mode=verbose&dispatch.sample_ratio=1&workload_pool=&earliest=1771509600&latest=1771513200&display.page.search.tab=events&display.general.type=events&sid=1771541631.304499_68EF55F8-FACF-4B05-8AE9-5C88C387EB9D

I can't find the error in Sentry (if memory serves, these events aren't uploaded there).

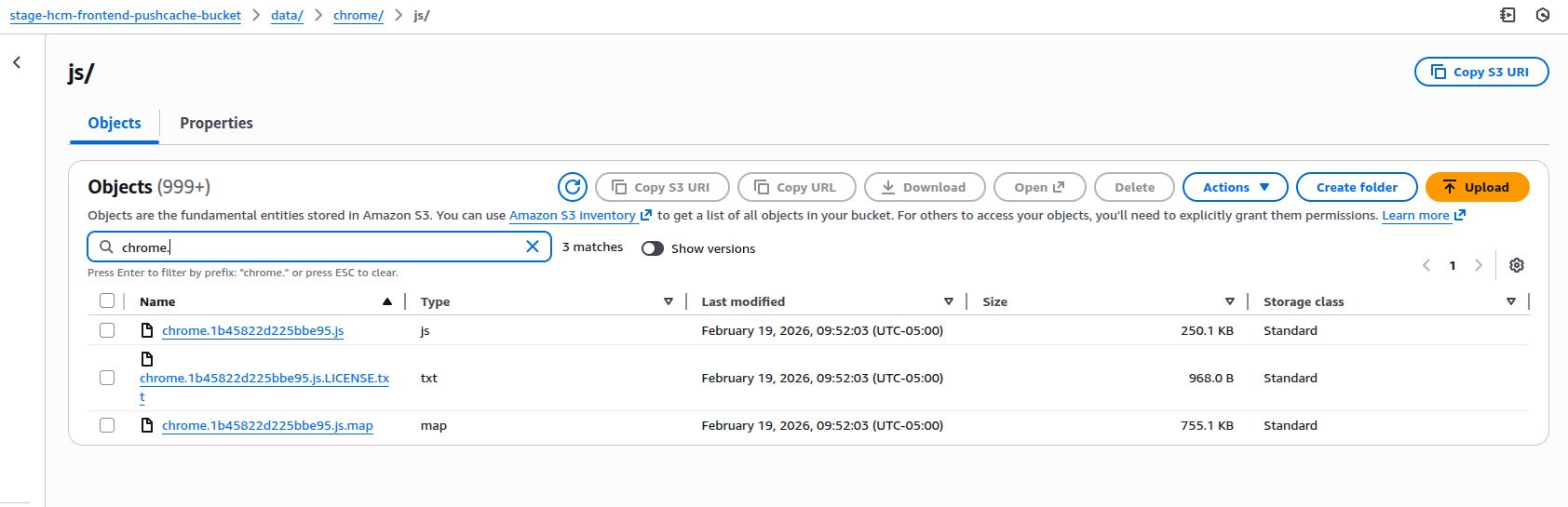

On to a possibly real problem: I see some assets getting 403s, about 100 every few hours. These assets should exist and are part of the previous builds. In S3, chrome.{blob}.js, an important file for running the app, has only the most recent entry. We should have multiple versions of this file from previous builds. There are multiple versions of chrome-root.{blob}.js, however, so something seems wrong with the mechanism that clears previous builds. (I believe it works by prefix, so maybe we should exclude files with a non-numeric prefix from being cleared this way.)
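To make the suggested exclusion concrete, here is a rough sketch of a prefix-based cleanup that skips non-numeric prefixes. It is not the actual clearing code, which I haven't confirmed: the function names, the `keep` parameter, the example key paths, and the assumption that keys arrive ordered oldest to newest are all hypothetical.

```python
from collections import defaultdict


def prefix_of(key: str) -> str:
    """Return the part of the file name before the first dot."""
    name = key.rsplit("/", 1)[-1]
    return name.split(".", 1)[0]


def select_keys_to_clear(keys_oldest_first, keep=1):
    """Pick the keys a prefix-based cleanup would delete.

    Assumption: keys are ordered oldest to newest, and the cleanup keeps
    the newest `keep` entries per prefix. Files whose prefix is not purely
    numeric (chrome, chrome-root, ...) are never selected.
    """
    groups = defaultdict(list)
    for key in keys_oldest_first:
        groups[prefix_of(key)].append(key)

    to_clear = []
    for prefix, group in groups.items():
        if not prefix.isdigit():
            continue  # proposed exclusion: leave named entry files alone
        to_clear.extend(group[:-keep] if keep > 0 else group)
    return to_clear


# Hypothetical keys, oldest first; the paths and hashes are made up.
example_keys = [
    "apps/chrome/js/chrome.aaa111.js",
    "apps/chrome/js/70337.aaa111.js",
    "apps/chrome/js/chrome.bbb222.js",
    "apps/chrome/js/70337.bbb222.js",
]
cleared = select_keys_to_clear(example_keys)
```

With these made-up keys, only the older `70337` chunk is selected and both `chrome.{blob}.js` versions survive. Whether old numeric chunks are safe to clear while previous builds are still being served is a separate question this sketch doesn't answer.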