- Story
- Resolution: Obsolete
- Normal
- None
- None
- Inference, RHOAI
- False
- False

User Story:

As a PSAP engineer, I want to prepare a POC showing the usefulness of in-place Pod resource resize, which is available behind a feature gate in OCP 4.14.

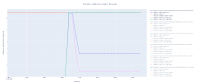

I'm thinking about this pattern that I observed in the memory usage:

We see that between 20:30 and 20:35, while the model was being loaded into GPU memory, RAM consumption spiked above 10GB and then dropped to about 1GB. With this pattern and the current immutable memory request/limit setting, the memory request must be 11GB; otherwise the model loading might fail.

With in-place Pod resource resize, we can lower it to 2GB once the Pod reports as ready to serve requests.
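To make the resize step concrete, here is a minimal sketch of the patch body that could be sent once the Pod is ready. It assumes the `InPlacePodVerticalScaling` feature gate is enabled and that the resize is done by patching the container's `resources` directly in the Pod spec. The container name `model-server`, the `2Gi` value, and the helper `build_resize_patch` are hypothetical, for illustration only. Whether the kubelet accepts lowering the memory limit without restarting the container is something the POC would need to verify.

```python
import json


def build_resize_patch(container_name, memory, include_limit=True):
    """Build a Pod patch body that sets a container's memory request
    (and optionally its limit) to `memory`.

    Hypothetical helper: it only builds the dict and does not talk to
    the cluster.
    """
    resources = {"requests": {"memory": memory}}
    if include_limit:
        # Keep request == limit so the Pod's QoS class does not change.
        resources["limits"] = {"memory": memory}
    return {
        "spec": {
            "containers": [
                # Containers are matched by name when the patch is merged.
                {"name": container_name, "resources": resources}
            ]
        }
    }


if __name__ == "__main__":
    # "model-server" and "2Gi" are assumed values, not taken from the ticket.
    print(json.dumps(build_resize_patch("model-server", "2Gi")))
```

The printed JSON could then be passed to `kubectl patch pod <pod-name> --patch '<json>'`, for instance from a small watcher that waits for the Pod's Ready condition.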

The POC would consist of instantiating multiple models concurrently, showing that without setting request=11GB they fail randomly, and that with in-place resize they no longer fail and make better use of the available resources.