-

Task

-

Resolution: Done

-

Critical

-

None

-

None

VolSync Performance:

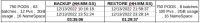

Using VolSync alone, let's benchmark syncing a large number of volumes from OpenShift Cluster A to OpenShift Cluster B through MinIO (S3).

- The time VolSync takes to sync from A to B is the lower bound on the time OADP Data Mover needs; OADP adds its own overhead on top of that.

- Note the conversation with PM and John Strunk here

John Strunk, Yesterday 2:02 PM: "In theory, I'd expect VolSync to be able to handle it, but we've never tried anything at this scale... I don't have the resources to try it. It should parallelize well, so if they have enough resources in their cluster (CPU, memory, network, storage), it should just work. I don't really know how to size it though w/o just trying it."

- Given requirements like those (not exact) in the Performance Criteria Specification:

- What is the time required to sync 10 volumes?

- What is the time required to sync 100 volumes?

- If possible (fine if it isn't), what is the time required to sync 600 volumes?

- Pranav's VolSync perf doc

- Does OADP offer a choice between rclone and restic, or just restic?

- No, just restic.
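To set up the 10 / 100 / 600 volume runs, one restic-based `ReplicationSource` per PVC is needed. The following is a minimal sketch of generating those manifests, not a tested harness. The namespace, the PVC naming scheme (`bench-pvc-N`), the per-PVC repository secret names, and the manual trigger tag are all hypothetical; the field names follow the VolSync `ReplicationSource` restic spec as I understand it and should be checked against the installed VolSync version.

```python
import json


def replication_source(pvc_name, namespace, repo_secret, run_tag):
    """Build one restic-based VolSync ReplicationSource as a plain dict."""
    return {
        "apiVersion": "volsync.backube/v1alpha1",
        "kind": "ReplicationSource",
        "metadata": {"name": f"{pvc_name}-src", "namespace": namespace},
        "spec": {
            "sourcePVC": pvc_name,
            # Manual trigger: the sync runs once per distinct tag value.
            "trigger": {"manual": run_tag},
            "restic": {
                # Name of the Secret holding the restic/S3 (MinIO) settings.
                "repository": repo_secret,
                "copyMethod": "Snapshot",
            },
        },
    }


def benchmark_manifests(volume_count, namespace="volsync-bench", run_tag="run-1"):
    """Return a Kubernetes List with one ReplicationSource per PVC.

    Assumes PVCs named bench-pvc-0..N-1 and one repository Secret per PVC
    (hypothetical naming), so each volume gets its own restic repository.
    """
    items = [
        replication_source(
            pvc_name=f"bench-pvc-{i}",
            namespace=namespace,
            repo_secret=f"restic-minio-{i}",
            run_tag=run_tag,
        )
        for i in range(volume_count)
    ]
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    # JSON is accepted wherever YAML manifests are, so no YAML library is needed.
    print(json.dumps(benchmark_manifests(10), indent=2))
```

The output can be saved to a file and applied on Cluster A; elapsed time per volume can then be read from each object's status once the sync completes.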

Performance Criteria Specification

- OCP site A to OCP site B on MinIO (S3)

- PVs will use a mix of CephFS and CephRBD

- Size of the representative PVs: min = 100Mi, max = 500Gi, average = 5.56 GB

- Type of data (how much does this matter for perf?): *⅔ file, ⅓ block*

- Network: 10GB
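As a sanity check on the numbers above, a rough lower bound on transfer time can be computed from the average PV size and the link speed. This is a back-of-the-envelope sketch only: it assumes "10GB" means a 10 Gbit/s link, treats 5.56 GB as decimal gigabytes, and ignores restic deduplication, compression, snapshot time, MinIO throughput, and the second hop from MinIO to Cluster B.

```python
AVERAGE_PV_BYTES = 5.56e9      # average PV size from the spec (decimal GB assumed)
LINK_BITS_PER_SECOND = 10e9    # ASSUMPTION: "10GB" read as a 10 Gbit/s network


def min_transfer_seconds(volume_count,
                         avg_bytes=AVERAGE_PV_BYTES,
                         link_bps=LINK_BITS_PER_SECOND):
    """Ideal wire time to move volume_count average-sized PVs once over the link."""
    total_bits = volume_count * avg_bytes * 8
    return total_bits / link_bps


if __name__ == "__main__":
    for count in (10, 100, 600):
        seconds = min_transfer_seconds(count)
        print(f"{count:>3} volumes: at least {seconds:,.0f} s ({seconds / 60:.1f} min)")
```

Measured VolSync times should come out well above these figures; the gap between the two gives a first indication of how much of the total is overhead rather than raw data movement.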

VolSync Kick off Doc:

https://docs.google.com/document/d/1Z6J7xIqbMFTUFoq4uoochyDjfn70luspwAEwBpgMsxA/edit?hl=en&forcehl=1