-

Bug

-

Resolution: Done

-

Major

-

None

-

None

-

False

-

False

-

Undefined

-

In order to stream changes captured from multiple tables in the right order, the connector runs multiple queries at a time (one per CDC table) and reads their result in the LSN order (as of DBZ-40).

As of DBZ-1065, the connector uses adaptive response buffering. The SQL Server documentation on adaptive buffering recommends to:

Avoid executing more than one statement on the same connection simultaneously. Executing another statement before processing the results of the previous statement may cause the unprocessed results to be buffered into the application memory.

This is where it likely happens at the driver level.

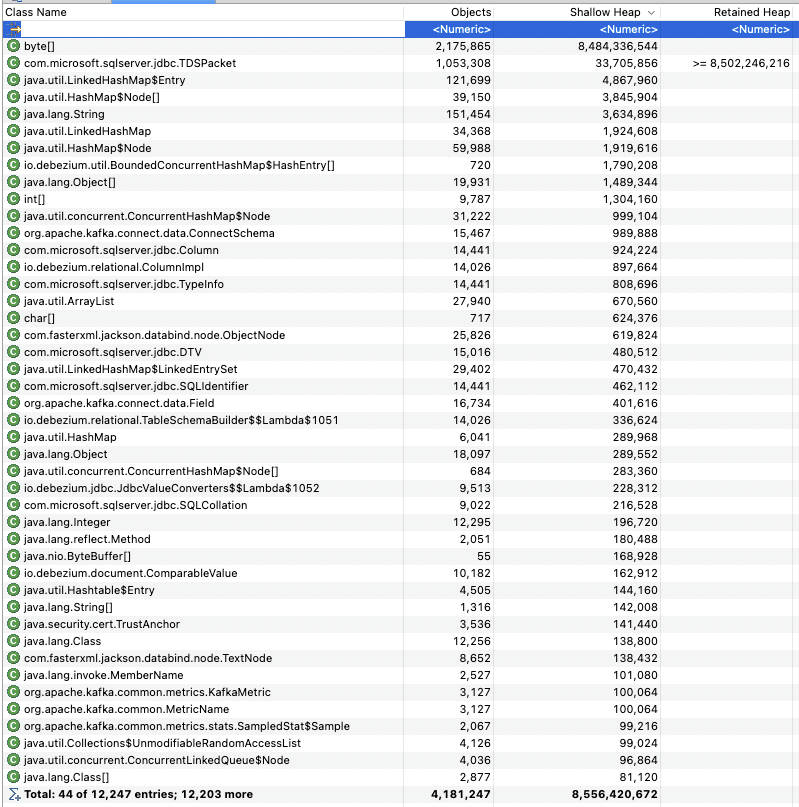

In case, if a connector captures changes from multiple tables and is significantly behind the source (has a lot of unprocessed CDC events), once it starts, it buffers all CDC data into memory resulting in abnormal memory usage:

If the available memory is insufficient, the connector will crash.

Potential workaround:

As a workaround, one could specify database.selectMethod=cursor in the connector configuration. This will make the server maintain a cursor for each result set on the server side instead of buffering on the client side. Although it may have its performance side-effects (ref).

Proposed solution:

Instead of requesting all the changes from the last processed to the last available, the connector could request changes in chunks:

- Using the data in the cdc.lsn_time_mapping table, find the LSN of the transaction that is N (configurable) transactions ahead from the last processed one.

- Use the LSN above as the max argument of cdc.fn_cdc_get_all_changes_<capture_instance>.

In the future, this improvement could be used to eliminate the need for a separate metadata connection. As the commit message implies, the metadata connection was introduced to avoid full result set buffering.

- is related to

-

DBZ-3992 SQL Server fails to read CDC events if there is a schema change ahead

-

- Closed

-