Details

- Type: Bug
- Resolution: Not a Bug
- Priority: Major
- Version: 1.7.0.CR1

Description

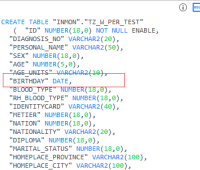

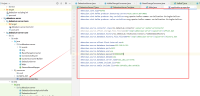

We first batch-inserted 20,000 rows into the table and found that the topic contained only 500 of them, so we initially assumed that Kafka was losing data. Troubleshooting showed that Kafka was not the problem: when we inserted just three rows into the table, Debezium wrote only one event to the topic. We then suspected that Oracle had not written our inserts to the redo log, so we used LogMiner to parse the current redo log. LogMiner showed that the redo log does contain every change from our inserts. That leaves Debezium as the likely cause. We do not know how Debezium processes the LogMiner output, but at present the problem appears to be on its side.

The problem only occurs when the batch insert is written as a single statement of the form `INSERT INTO table SELECT * FROM table`. If we split the batch into separate statements, for example three individual `INSERT INTO` statements against the monitored table, no records are missing from the topic.
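To make the two insert patterns concrete, here is a minimal local sketch. It assumes Python's built-in `sqlite3` module as a stand-in for Oracle, purely for illustration: it does not exercise LogMiner or Debezium. The table `demo` and the helper `rows_inserted` are hypothetical. Both patterns add three rows; the difference is that `INSERT ... SELECT` does so in one statement. Since Debezium emits one change event per changed row, three events would be expected in the topic in either case.

```python
import sqlite3


def rows_inserted(statements, seed_rows=3):
    """Run each statement against a fresh in-memory SQLite table (a stand-in
    for the monitored Oracle table) and return how many rows each one inserted."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical table used only for this illustration.
    conn.execute("CREATE TABLE demo (id INTEGER, name TEXT)")
    # Pre-populate the table so that INSERT ... SELECT has rows to copy.
    conn.executemany(
        "INSERT INTO demo VALUES (?, ?)",
        [(i, f"row{i}") for i in range(seed_rows)],
    )
    counts = []
    for sql in statements:
        cur = conn.execute(sql)
        # rowcount reports the number of rows changed by this single statement.
        counts.append(cur.rowcount)
    conn.commit()
    conn.close()
    return counts


# Pattern that lost events: one statement inserting several rows.
bulk = rows_inserted(["INSERT INTO demo SELECT * FROM demo"])

# Pattern that worked: one statement per row.
single = rows_inserted([
    "INSERT INTO demo VALUES (10, 'a')",
    "INSERT INTO demo VALUES (11, 'b')",
    "INSERT INTO demo VALUES (12, 'c')",
])

print("INSERT ... SELECT rows per statement:", bulk)
print("single-row INSERTs rows per statement:", single)
```

In both runs the total number of inserted rows is the same, so the number of row-level change events expected from the connector should match as well.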